Apple is not behind on AI in one key way.

At this point it is a pretty ice-cold take to say that Apple have lagged behind in AI. The long-rumored Siri revamp is still nowhere to be seen, and I'm not holding my breath for any improvement on that front at WWDC in a few weeks' time.

There is one key area, though, where they have stealthily been gaining on their competition: the hardware to run AI models. Apple Silicon is one of the best and most performant chip lineups when it comes to running AI models and, more importantly, because of its low power draw, its performance per watt and performance per $ are closing in on NVIDIA cards.

Take the M4 Mac Mini, for example: the whole machine costs £599, and using Thunderbolt you can link several together to form a cluster and pool their resources. This means that for the price of one NVIDIA RTX 6000 card at £8999 (now an older card, mind you) you can buy 4 Mac Studios, which are more performant still than the Mac Mini, with combined resources of 56 CPU cores, 128 GPU cores and 144GB of unified memory. Meanwhile, on the NVIDIA side you've still got to build a whole computer around the GPU to support it.
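To make the arithmetic concrete, here is a rough Python sketch of the numbers above. The per-machine Mac Studio figures (14 CPU cores, 32 GPU cores, 36GB) are simply the combined totals divided by four, and the `Machine` and `pooled` names are made up for illustration. Treating a cluster as a straight sum of its parts is an idealisation, so read this as back-of-the-envelope maths rather than a benchmark.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Machine:
    name: str
    cpu_cores: int
    gpu_cores: int
    memory_gb: int


def pooled(machine: Machine, count: int) -> Machine:
    """Naively sum the resources of `count` identical machines."""
    return Machine(
        name=f"{count} x {machine.name}",
        cpu_cores=machine.cpu_cores * count,
        gpu_cores=machine.gpu_cores * count,
        memory_gb=machine.memory_gb * count,
    )


# Assumption: per-machine specs inferred by dividing the quoted totals by four.
MAC_STUDIO = Machine("Mac Studio", cpu_cores=14, gpu_cores=32, memory_gb=36)
cluster = pooled(MAC_STUDIO, 4)

# Prices as quoted in this post.
RTX_6000_PRICE_GBP = 8999
MAC_MINI_PRICE_GBP = 599

# How many whole base Mac Minis fit inside the price of one card.
minis_for_one_card = RTX_6000_PRICE_GBP // MAC_MINI_PRICE_GBP

print(cluster)
print(f"Base Mac Minis for the price of one card: {minis_for_one_card}")
```

Swapping in different specs or prices is just a matter of changing the constants, which is handy given how quickly both move.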

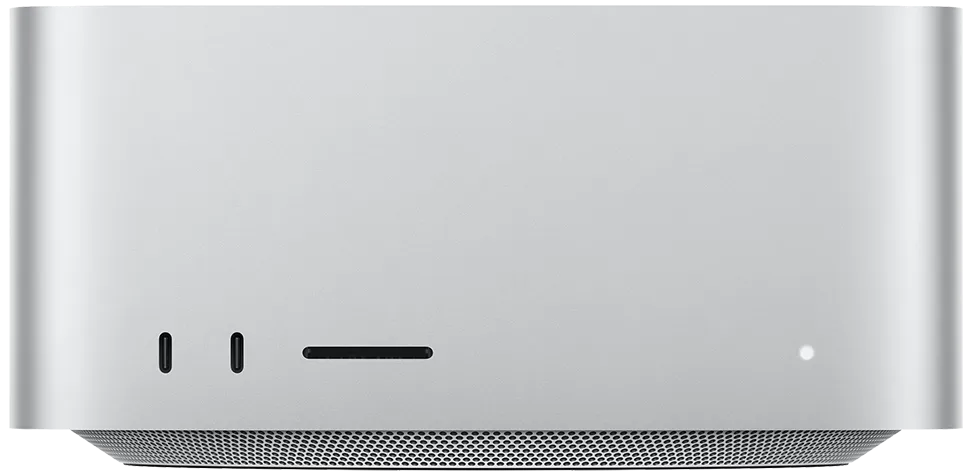

Apple Mac Studio

This is just the beginning, too. If you were to go with the current range-topping M3 Ultra chips with 256GB of RAM (you used to be able to buy up to 512GB, but with recent events Apple quietly removed this), each Mac Studio will set you back a cool £7699, still less than the single NVIDIA card with no system to run it in. NVIDIA's real answer to clustering 4 Mac Studios, and what it wants you to buy instead, is the DGX B200 "Deep Learning Appliance" for a wallet-whacking £460k!

NVIDIA DGX B200

So for smaller businesses who don't have a cool half mill to drop on some thinking sand, Mac Studio clusters become a reasonable idea if you want to run some AI locally for your business or for local research purposes. For the home, running Mac Minis is even more compelling, as shown by the recent trend of people buying base-model M4 Mac Minis to be their dedicated OpenClaw environment.

This has been an under-the-radar success for Apple, so much so that they are struggling to keep up with the consistent demand for M4 Mac Minis at this late stage in the device's life cycle, assuming it does see a successor at WWDC or later in the year with M5 and M5 Pro offerings. Currently the M4 Mini is being sold above sticker price at many third-party retailers, if they have stock at all. Just a week ago the published lead time for shipping was 6-8 weeks, and at the time of writing the base model has moved to "currently unavailable".

There have even been people who have found other ways to use M4 Mac Minis for bulk AI processing work at scale, more cheaply than with more conventional approaches. For example, Marco Arment, developer of Overcast, put together a custom scalable cluster of M4 Mac Minis for transcription work in his app. The cluster reads the RSS feeds of all the podcasts available within Overcast and, when a new episode is found, adds it to the queue for transcription. Once this is done, the transcript is added to that episode's metadata inside Overcast for users to see when they pull the episode down to their phone.
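The shape of that pipeline (poll feeds, queue new episodes, transcribe, attach the result to the episode) can be sketched in a few lines of Python. To be clear, this is my own toy illustration and not Marco's actual code: every name here is hypothetical, the feed is an inline sample, and `transcribe` is a placeholder where a real speech-to-text model would run.

```python
import queue
import xml.etree.ElementTree as ET

# Hypothetical sample feed; a real system would fetch this over HTTP.
SAMPLE_FEED = """<rss version="2.0"><channel><title>Example Podcast</title>
<item><guid>ep-1</guid><title>Episode 1</title>
<enclosure url="https://example.com/ep1.mp3" type="audio/mpeg" length="0"/></item>
<item><guid>ep-2</guid><title>Episode 2</title>
<enclosure url="https://example.com/ep2.mp3" type="audio/mpeg" length="0"/></item>
</channel></rss>"""


def find_new_episodes(feed_xml, seen_guids):
    """Return episodes in the feed whose guid has not been processed yet."""
    episodes = []
    for item in ET.fromstring(feed_xml).iter("item"):
        guid = item.findtext("guid")
        enclosure = item.find("enclosure")
        if guid is None or enclosure is None or guid in seen_guids:
            continue
        episodes.append({"guid": guid, "audio_url": enclosure.get("url")})
    return episodes


def transcribe(audio_url):
    """Placeholder: stands in for running a speech-to-text model."""
    return f"[transcript of {audio_url}]"


def run_once(feed_xml, seen_guids, metadata):
    """Queue every new episode, transcribe it, and store the result."""
    jobs = queue.Queue()
    for episode in find_new_episodes(feed_xml, seen_guids):
        jobs.put(episode)
    while not jobs.empty():
        episode = jobs.get()
        metadata[episode["guid"]] = {"transcript": transcribe(episode["audio_url"])}
        seen_guids.add(episode["guid"])


seen = {"ep-1"}   # pretend Episode 1 was handled on an earlier run
metadata = {}     # stands in for the per-episode metadata store
run_once(SAMPLE_FEED, seen, metadata)
print(metadata)
```

In a real cluster the queue would presumably be shared between machines, so each Mac Mini can pull the next job whenever it is free. That is the part that makes adding more Minis an easy way to scale.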

This would be possible with an NVIDIA setup, but at the scale needed to crunch through every single episode of every English-language podcast, the performance per $ of the Mac Mini cannot be beaten.

Last Edited: 1 month, 2 weeks ago.